Images captured by cameras in industrial sites can be used for visual inspection in a variety of use cases, from improved quality assurance to better worker safety or optimised maintenance procedures, among others. In this post we’ll look at a quality inspection demo case that illustrates one of the newest features of the Waylay platform: using ML models within automation workflows.

The use case: validating quality thresholds for goods on an assembly line, through automated visual inspection

We’re looking at a demo assembly line whose objective is to place 4 screws on wooden boards. The aim of our automated visual inspection solution is to validate the quality of the assembled boards and, for all boards that don’t pass the quality threshold, determine what the problem type is and take appropriate action, without any on-site human involvement.

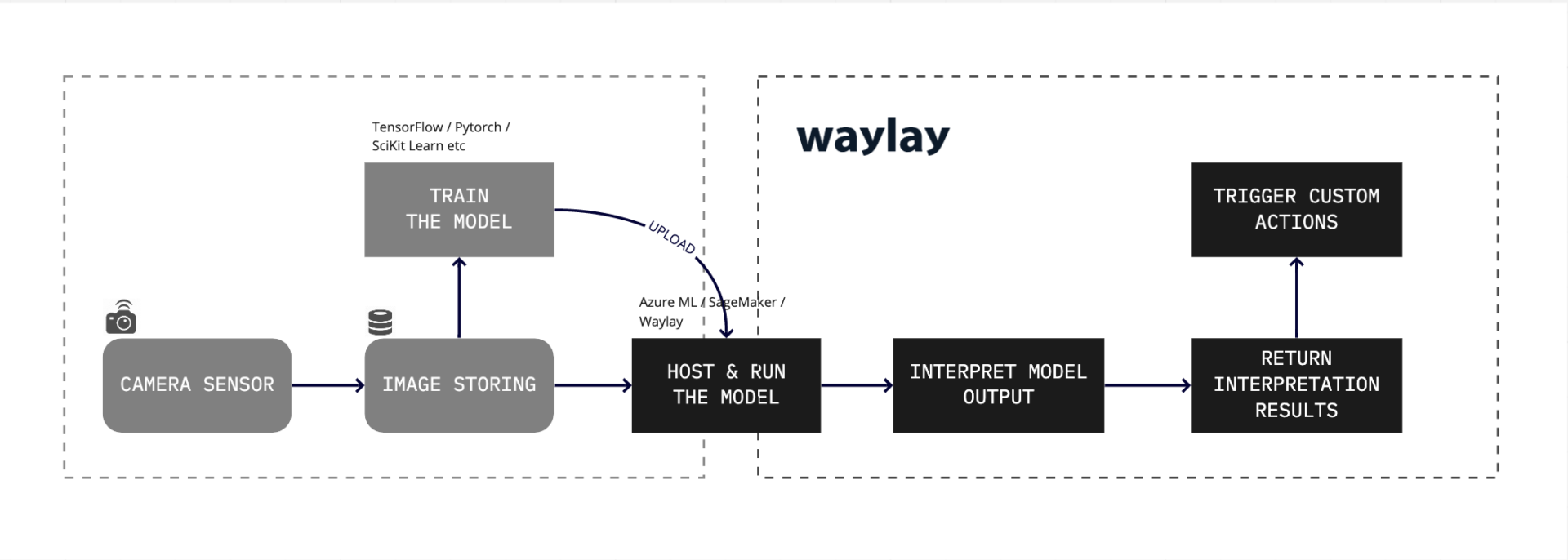

To achieve this aim, these are the main steps:

- capturing and storing image data from internet-connected cameras (for training the model first and for the solution in production later on)

- training an image classification algorithm to detect screws, holes or lack thereof in the assembly line images

- running the model in production to obtain the visual classification results

- matching the results to specific business rules to either validate or invalidate the quality threshold

- using the matched results to trigger different automated actions onto the appropriate business channels

- maintaining the solution and testing, adapting and extending it with minimal effort and resources

The first two steps (capturing and storing the images and training the model) are taken outside of Waylay, by the customer data science teams within their preferred frameworks. The remaining four steps are taken within the Waylay environment.

Training the visual inspection model

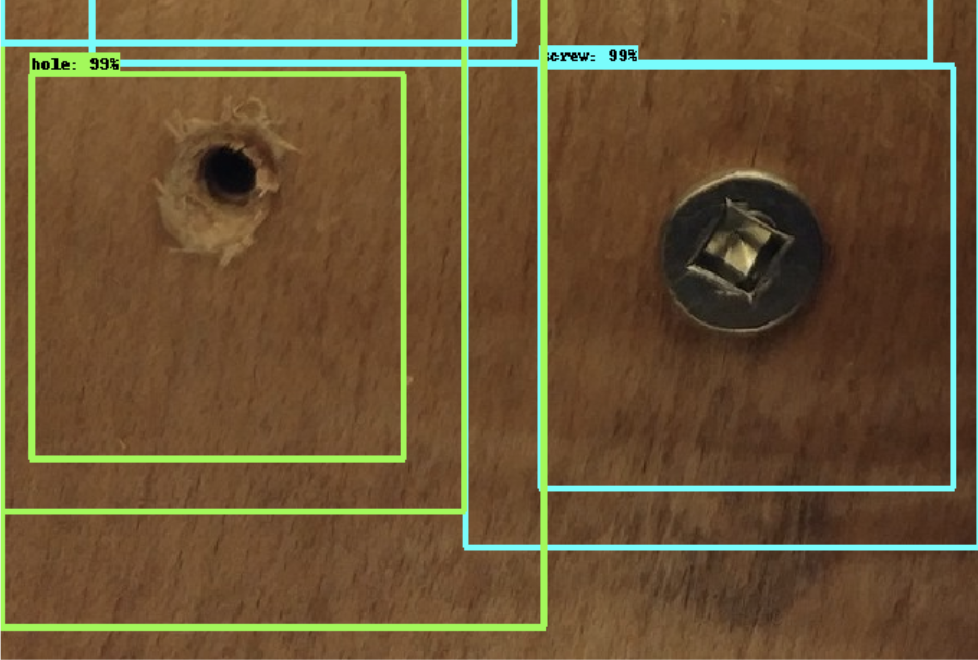

The wooden boards are photographed at a specific camera inspection point set up along the assembly line. The captured and stored image data is then used to train the object classification ML model. The model needs to do one thing: determine whether the boards have four screws in the correct places. It does this by classifying three types of visual objects inside the image: a screw, a hole and finally something that is neither screw nor hole, assigning probabilities for each.

In our case, the quality threshold is met when visual inspection confirms 4 screws per wooden board. Any other result (i.e. one or more holes, no screws and no holes, etc.) is bad news for the owner of the assembly line. And depending on the type of bad news, different alerting or remedy actions need to be taken.

Integrating the ML model within an IoT data automation workflow

Once the model is trained and ready (usually within 14 to 30 days), we can use it within the Waylay platform to achieve the goals of our use case.

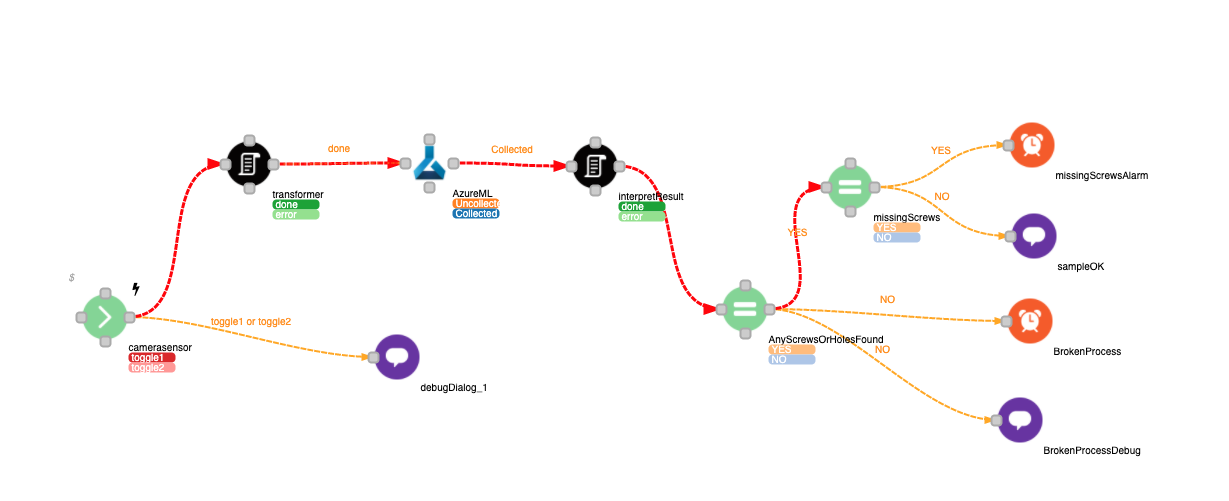

We built a reactive workflow that starts running whenever a new image from the assembly line camera becomes available. The URL of the image location triggers the model to start executing and process the image. In this example, we are interfacing (via REST) with a model that is hosted and runs on Azure ML, but it could just as well be AWS SageMaker or any other third-party provider. We can also host the model ourselves, in which case we would call it internally from within Waylay.
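As a rough illustration of the REST interaction described above, the sketch below builds a scoring request for one image URL. It is written in Python for readability and is not Waylay's own code. The endpoint URL, the API key, the bearer-token header and the `image_url` payload field are all assumptions: the real request shape depends on how the model was deployed.

```python
import json
import urllib.request

# Hypothetical values: replace with the scoring URL and key of your own deployment.
SCORING_URL = "https://example.invalid/score"
API_KEY = "replace-me"


def build_scoring_request(image_url, scoring_url=SCORING_URL, api_key=API_KEY):
    """Build (but do not send) a POST request asking the model to score one image."""
    # Assumed payload shape: the model only needs to know where the image lives.
    body = json.dumps({"image_url": image_url}).encode("utf-8")
    return urllib.request.Request(
        scoring_url,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def score_image(image_url):
    """Send the request and decode the JSON response (needs a reachable endpoint)."""
    request = build_scoring_request(image_url)
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping request construction separate from sending makes it possible to check the payload without a live endpoint, and to swap the provider by changing only the URL and credentials.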

After the image processing is complete, we receive the results (objects, bounding boxes and probabilities) and interpret them according to the specific business logic of our assembly line.

It’s important to have this interpretation happen outside of the model itself. Decoupling image processing (i.e. assigning probabilities to object classes) from interpretation logic (i.e. defining the probability threshold above which an object class is accepted) makes the end-to-end solution more adaptable and flexible, and cheaper to run.

For example, let’s assume our demo assembly line owner has two different customers: Customer-A needs 4 screws per wooden board and Customer-B needs 3 screws per wooden board. The owner can use the same algorithm for both, without needing to retrain it or train and host a new one. It will return the same object classification results for both, but the interpretation will be different. While 4 screws found for a Customer-A board will pass quality validation, 4 screws found for a Customer-B board will not.
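The two-customer example can be sketched in a few lines of Python. This is a minimal illustration, not the actual interpretation script: the `Detection` type, the label names and the `REQUIRED_SCREWS` table are assumptions, and the 0.999 threshold mirrors the 99.9% example used later in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    label: str          # "screw", "hole" or "other" (assumed label names)
    probability: float  # model confidence between 0.0 and 1.0


# Hypothetical per-customer business rules, kept outside the model.
REQUIRED_SCREWS = {"Customer-A": 4, "Customer-B": 3}


def count_screws(detections, threshold=0.999):
    """Count screws whose probability is higher than the threshold."""
    return sum(
        1 for d in detections
        if d.label == "screw" and d.probability > threshold
    )


def passes_quality(detections, customer, threshold=0.999):
    """Apply the customer's rule to the same model output."""
    return count_screws(detections, threshold) == REQUIRED_SCREWS[customer]


# The same model output, interpreted under two different rules.
board = [Detection("screw", 0.9995)] * 4
print(passes_quality(board, "Customer-A"))  # True
print(passes_quality(board, "Customer-B"))  # False
```

Adding a third customer only means adding an entry to the rules table; the model itself is untouched.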

Getting back to our workflow, we see that the interpretation script in this customer case generates two possible states:

- EitherScrewOrHoleFound. If no screws or holes are found, then the assembly process is broken. In the interpretation code, this case corresponds to none of the bounding boxes having a probability higher than a set threshold, e.g. 99.9%. If either a screw or a hole is found, we move on to check the next state.

- MissingScrews. If there are missing screws, the machine that places them likely needs a check-up. In the interpretation code, this state corresponds to one or more “hole” object classes found, with a probability higher than the set threshold.
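A minimal Python sketch of how these two states could be derived from the model output is shown below. The result format (a list of dictionaries with `label`, `probability` and `box` keys) and the label names are assumptions made for illustration; the actual interpretation script may differ.

```python
THRESHOLD = 0.999  # the 99.9% example threshold from the text


def interpret(detections, threshold=THRESHOLD):
    """Map raw detections to the two workflow states."""
    # Keep only the labels of detections the model is confident about.
    labels = [d["label"] for d in detections if d["probability"] > threshold]

    # "No" here means the assembly process itself is broken.
    either_found = any(label in ("screw", "hole") for label in labels)
    # At least one confident "hole" means a screw is missing.
    missing = "hole" in labels

    return {
        "EitherScrewOrHoleFound": "Yes" if either_found else "No",
        "MissingScrews": "Yes" if missing else "No",
    }


# Example: three screws and one hole on a board (hypothetical model output).
board = [
    {"label": "screw", "probability": 0.9997, "box": [10, 10, 30, 30]},
    {"label": "screw", "probability": 0.9996, "box": [90, 10, 110, 30]},
    {"label": "screw", "probability": 0.9998, "box": [10, 90, 30, 110]},
    {"label": "hole", "probability": 0.9995, "box": [90, 90, 110, 110]},
]
print(interpret(board))
```

Because the threshold is a plain parameter, it can be tuned per line or per customer without touching the model.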

Further on, the workflow continues by assigning values to each of the two states, based on which different actions are set up to trigger. If “MissingScrews” evaluates to “No”, then the board passes quality validation and is flagged as such. If it evaluates to “Yes”, then an alert is sent to an operator, suggesting a check of the screw-placing machine.
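The routing from states to actions can be sketched as a small decision function. This is an illustrative Python sketch only: in Waylay the routing is configured in the workflow itself, and the action names below are hypothetical. The action for a broken assembly process is also an assumption, since the text only says that this case indicates a broken process.

```python
def choose_action(states):
    """Pick one action name from the two workflow states."""
    if states["EitherScrewOrHoleFound"] == "No":
        # Assumed action: nothing recognisable was found on the board.
        return "alert_assembly_process_broken"
    if states["MissingScrews"] == "Yes":
        # An operator is asked to check the screw-placing machine.
        return "alert_check_screw_machine"
    # Screws found and no holes: the board passes quality validation.
    return "flag_board_passed"


print(choose_action({"EitherScrewOrHoleFound": "Yes", "MissingScrews": "No"}))
```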

Main benefits of automating visual inspection with Waylay

It’s important to note that this workflow enables both quality validation and root cause analysis, helping our demo assembly-line owner to:

- accelerate and streamline the quality validation process by completely automating it

- improve fault response times by setting up automated alerts to designated support teams

- optimise machine health and maintenance by catching early wear and tear

- empower field support teams with actionable fault diagnostic analysis

- improve the overall assembly process through increased real-time process visibility

- minimise the risk to worker safety through remote-first operations

- liberate human resources by replacing human visual inspection with cameras and software

Here is the video demonstration of how we set up our automated quality inspection solution in Waylay.

Learn more

For a more in-depth technical overview of our entire range of capabilities in ML operations, we encourage you to watch our on-demand webinar or visit the technical documentation site.